The film industry grows more advanced every day, and still-developing artificial intelligence (AI) is becoming a transformative force within it, both encouraging creative endeavors and raising ethical concerns. One company at the forefront of this AI revolution is StoryFit, whose CEO, Monica Landers, navigates the delicate balance between technological advancement and the essence of human storytelling.

A StoryFit Revolution

StoryFit’s innovative use of AI extends beyond script analysis; it delves into the intricate nuances of how audiences connect with narratives and characters. The company compiles data on storytelling elements, offering insights that help studios make important decisions about script acquisition, character promotion, and film adaptations of books.

Originally designed to help publishers sift through book submissions, StoryFit redirected its focus to the film industry, becoming a driving force behind successful films and TV series. The company’s AI technology evaluates a script’s marketability, providing valuable data on the potential success of high-risk film investments.

Unveiling Character Dynamics

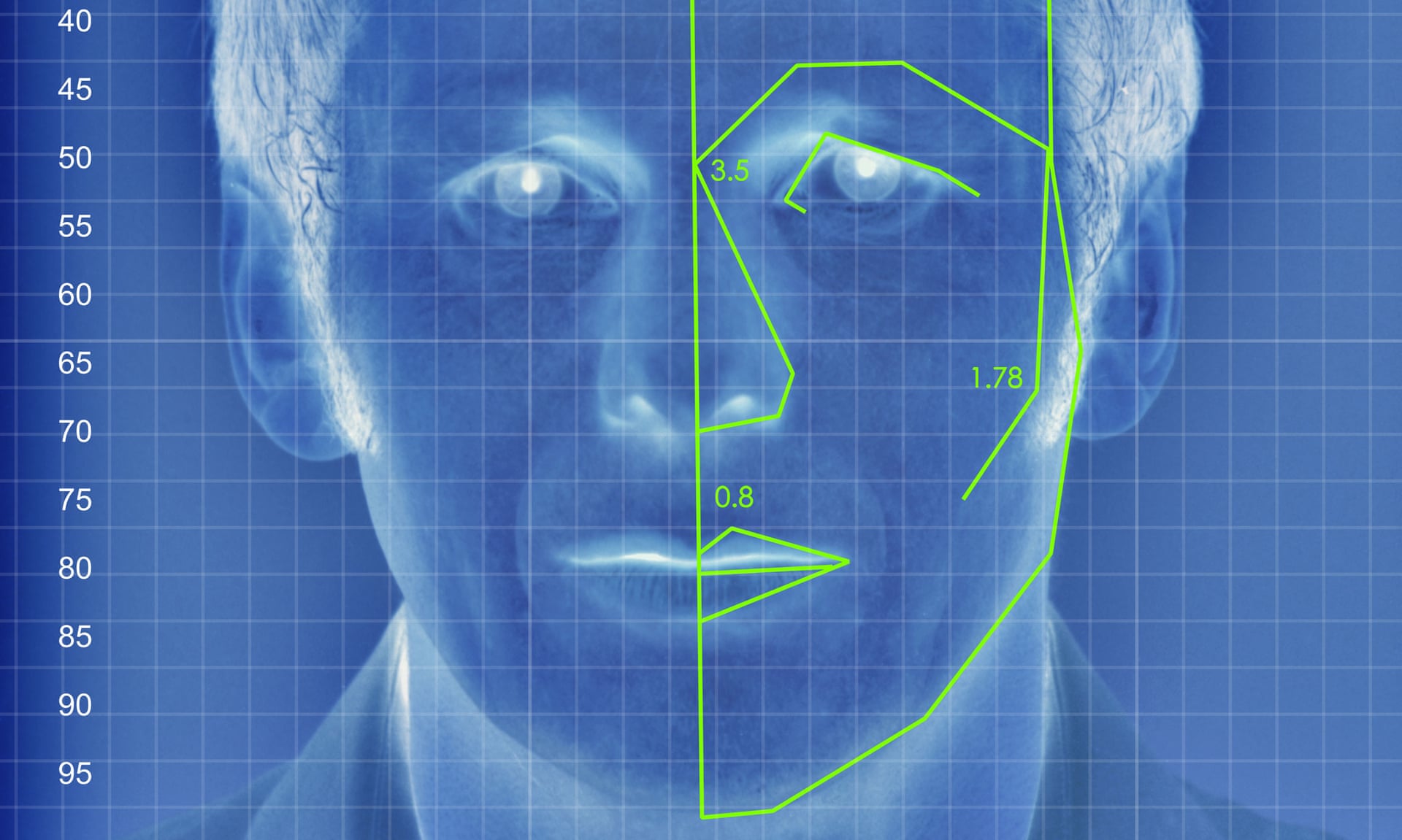

One of StoryFit’s remarkable achievements lies in its ability to analyze character traits using AI. By assessing audience responses, the technology determines the heroism or relatability of main characters, aiding creative professionals in identifying and improving potential imbalances.

The company’s application of AI extends to beloved TV series like “The Queen’s Gambit” and HBO’s “The Last of Us,” where it measures characters’ strength and originality. This data-driven approach not only celebrates exceptional storytelling but also serves as a tool to navigate the high-stakes film industry.

AI’s Influence Beyond Storytelling

As AI permeates various facets of filmmaking, concerns arise about its impact on content creation, especially in nonfiction and documentary spaces. The industry urgently needs advocacy for protections against AI and for ethical guidelines in its decision-making processes.

The dark side of AI appears in black-box algorithms that dictate popularity, influencing which stories get told and how. Social media platforms, particularly TikTok, reward content tailored to algorithms designed to trigger dopamine release. In Hollywood, producers secure lucrative deals by catering to AI-driven decision-making processes at studios and streaming platforms.

Documentary Filmmaking at Risk

The article underscores the vulnerability of documentary filmmaking to AI curation, where data-based decisions shape which content gets exposure. It points to a potential loss of human curation, transparency, and accountability as algorithms decide which projects to buy and how to make them.

Filmmakers and industry veterans express concerns about giving AI decision-making authority, which could lead to risk aversion and a decline in innovative content. The ethical dilemmas surrounding deepfake technology call into question the trustworthiness of content and the preservation of nonfiction storytelling’s integrity.

Conclusion

Even as AI demonstrates its value in boosting creativity and decision-making, it holds too much authority. Human judgment, accountability, and openness must be upheld in an industry that increasingly depends on AI-generated insights.

In summary, there is a pressing need to safeguard the authenticity of nonfiction storytelling, placing a high value on truth and trust. With the ongoing integration of AI into filmmaking, maintaining a robust moral foundation rooted in principles like honesty and respect is essential to establish a balanced and cooperative relationship between technology and storytelling driven by humans.

AI Is Coming for Filmmaking: Here’s How – The Hollywood Reporter

Can Artificial Intelligence Help The Film Industry? It Already Is. (forbes.com)