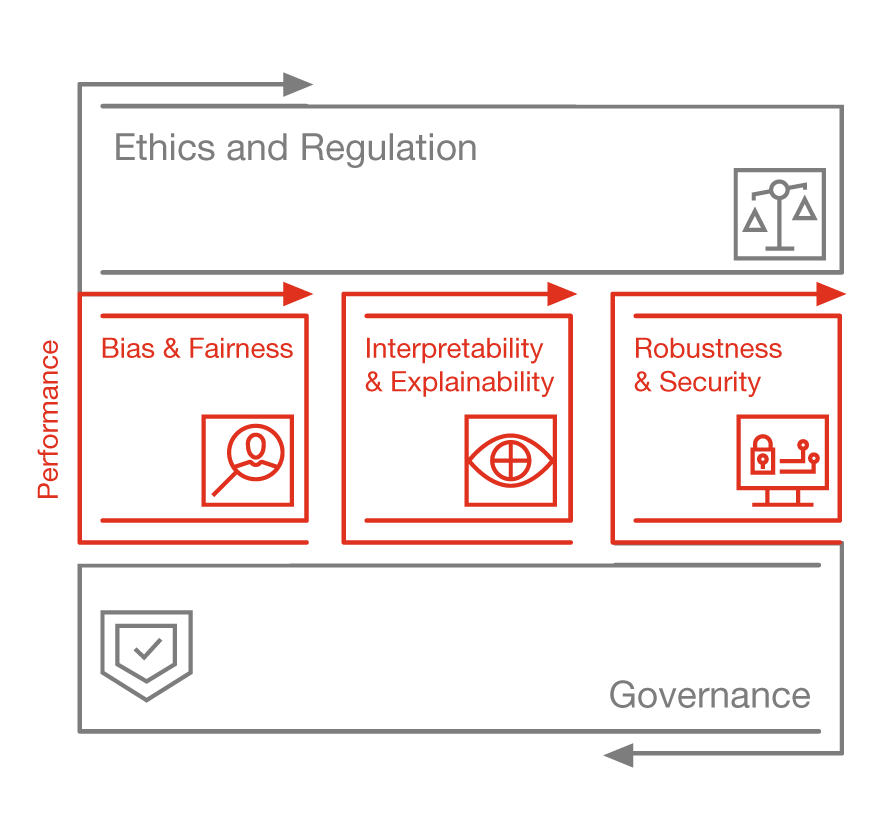

© Image inserted from Twitter; original image from the EU Ethics Guidelines for Trustworthy AI

Artificial intelligence is one of the fastest-growing fields today, and it is already in use across many disciplines around the globe. However, the technology needs to be monitored to prevent bias and other negative impacts from affecting the world.

Responsible AI aims to prevent the harmful consequences of AI through policies on bias, ethics and trust. The field is relatively new, but many companies are already incorporating it into their infrastructure. It is concerned with managing and regulating intelligent systems so that they do not harm society.

There are three major factors to consider when determining whether a particular piece of AI technology is suitable for society:

- Awareness

Awareness of accountability in AI research and development is necessary. That is, who is to blame if an intelligent machine makes an error? Researchers should be able to determine the possible effects of releasing a system into the world.

- Reason

AI algorithms learn from the data they receive. However, they should also be able to explain the reasoning behind their actions and justify them.

- Transparency

Transparency is required to make sure people know what a particular intelligent system does, and what it is capable of. It requires governance to ensure it delivers societal good.

© Image inserted from pwc.com

Responsible AI research is being carried out across different platforms to devise rules and regulations for governing AI. RRI (Responsible Research and Innovation) is an interactive and transparent process that holds individual innovators or groups of innovators responsible for the acceptability, desirability and sustainability of a technology in society. It can be implemented between different parties using the following approaches:

- Permanent individuals or groups from different backgrounds who discuss the innovation and its possible outcomes. This includes ethics review boards within organizations.

- A set of rules and guidelines that the outcomes of research and innovation should follow, so that they are ethical, legal and safe.

- A code of conduct detailing the behavioral choices open to stakeholders in different sectors.

- Industry standards that set a minimum threshold for the safety required for testing and development of new technology.

- Approaches and methods for keeping track of the future impacts of a particular technology, such as scenario planning and modeling.

Since AI is prone to biases and misjudgments, RRI can help ensure the technology is used for the good of the world. In the end, human input is required to make sure that technology does not work against humanity and that its processes meet the standards for ethics, trust and bias. This improves accountability and promotes a better public image of such systems.

Resources: